data? Archive

How much data am I using on my smartphone

Loknath Das

Comments off

Most cell phone carriers, like AT&T, T-Mobile, Verizon, and Sprint, have data usage limitations. Unless you have an unlimited data plan, exceeding the

Apple to attend meeting promoting easy access to health data

Loknath Das

Comments off

An Apple representative is slated to attend a meeting on Monday held by the Carin Alliance, a nonpartisan group currently advocating to

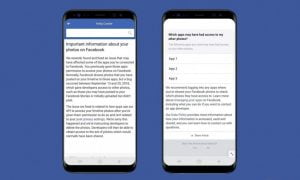

New Facebook data leak allowed apps broader access to 6.8 million users’ photos

Loknath Das

Comments off

It looks like the year couldn’t end without yet another Facebook scandal. This time around the company has come clean about discovering

Airtel’s latest Rs 2,199 broadband plan offers 1,200GB data at 300Mbps internet speed

Loknath Das

Comments off

Airtel on Monday launched a new data plan of Rs 2,199 per month under its V-fiber Broadband Model for those looking for high-data consumption

Apple served with warrant for Texas shooter’s iPhone and iCloud data

Loknath Das

Comments off

Texas Rangers have served Apple with a search warrant for data from deceased Sutherland Springs gunman Devin Patrick Kelley, who killed

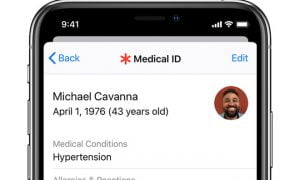

Why people trust Apple with their health data more than Google or Amazon

Loknath Das

Comments off

Getty Images Tim Cook was the second highest-paid executive of 2016, pulling in $150,036,907 Would you trust a technology company like Apple,

Apple to set up its first data centre in China

Loknath Das

Comments off

Please use the sharing tools found via the email icon at the top of articles. Copying articles to share with others is

Big data applications

Subhadip

Comments off

Richard J Self, Research Fellow – Big Data Lab, University of Derby, examines the role of software testing in the achievement of

Google Outlines the Amazing Opportunities of Data in New Report

Subhadip

Comments off

As we get caught up in the daily excitement of the latest trends, functionalities and changes in social media and digital marketing,

Right to be Forgotten: Protection of privacy or breach of free data?

Subhadip

Comments off

The data protection authority of France has fined Google by €100,000 (Rs. 74,64,700 approx.) for inadequate removal of history data and activities